Tapping AI for SEO

by Bob Sakayama

The CEO of NYC based consultancy TNG/Earthling, Bob Sakayama has managed the search performance strategies of this and thousands of other websites. He specializes in large & multi-site systems where search is critical, and has long been a leader in the remediation of Google penalties - see Google-Penalty.com. He's been quoted in Forbes, addressed seo gatherings as guest speaker, called as an expert witness, and given rare interviews. He created Protocol, the first search enabled content management system, currently running on several thousand websites. He serves both very large and very small businesses, along with seo agencies in multiple countries, and investors seeking search risk evaluation.

Updated May 2024

The Proliferation Of AI Tools

Gemini (formerly Bard), Perplexity, Claude 3, GPT4, GPT4o, Copilot, Suno, Udio, Dall E3, Chatsonic/Writesonic...

There are now so many choices - over 300 & growing - that some will eventually fall away as the marketplace culls the weakest performers. And the free versions are getting much better - some, like Claude 3, outperform the paid version of GPT4 in many of our tests, which are focused on content generation. Some may succeed by specializing in images (Gemini, Dall E3), or music (Suno, Udio). In a very recent test, Writesonic.com outperformed most others in the creation of long form content, creating both the content & image - check out this post on Google-Penalty.com.

Updated December 2023

Developing Useful Prompts For Writing Content

I've tested a good number of chatbots for writing skills - Jasper, BingChat, Bard, On-Page.ai & others, but my clear choice is OpenAI's GPT4. It provides the most sophisticated language and responds really well to iterative prompts, or criticisms, where a followup instruction requests correction or a rewrite. But it's way more than a chatbot. It can write code. It can interpret data. This post is focused on some effective techniques to help you use these capabilities for content creation to get the best results.

GPT4 responds like a chatbot, so it will answer questions, and many experts train newbies by teaching them how to ask good questions. My problem with this is that answers to questions are rarely in the form of usable content. But they may lead you there or provide clues to include in more productive prompts. The key to getting good results is familiarization through experimentation. Write prompts as if you are giving a writing assignment to a writer and then follow up with iterative prompts to improve the results.

Start developing your prompts by clearly defining the topic while providing the most important information within the first prompt. Keep it relatively simple - loading the 1st prompt with too much information or too many instructions can overload the generator and it won't remember them all. That said, it's impressive how much it can retain from prompts. But if you have a lot of instructions, iterate through them in response to the initial output.

The AI is trained to give short responses unless given instructions to go deeper. Use terms like "comprehensive", "full length", "detailed", "include specific examples", "explain in detail", etc. to trigger more informative content in the original prompt. Increasing the number of words or characters or paragraphs does not lead to better information. The best results come from subsequent corrective prompts, and sometimes by starting over until the first pass is acceptably on target. Instruct the AI as you would when asking a writer for another draft. When the generator pauses, use the "continue" prompt to get more out of the AI - something that I always do. Most in depth content requires many corrective prompts - (they might all be "continue"). The "continue" prompt is a way to coax the AI to output more of what it knows - a way to make the AI dig deeper.
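The "continue" routine described above can be pictured as a simple loop. This is only a minimal sketch: `send` is a hypothetical stand-in for whatever delivers a prompt to the chat session and returns the reply, and `fake_session` is a canned placeholder (not a real API) so the example runs offline.

```python
from typing import Callable, List


def draw_out(send: Callable[[str], str], first_prompt: str, max_continues: int = 7) -> str:
    """Send the first prompt, then repeat "continue" and collect every reply."""
    parts: List[str] = [send(first_prompt)]
    for _ in range(max_continues):
        reply = send("continue")
        if not reply.strip():
            break  # nothing more came back, so stop asking
        parts.append(reply)
    return "\n\n".join(parts)


def fake_session() -> Callable[[str], str]:
    """Hypothetical stand-in for a chat session: returns canned replies in order."""
    canned = iter(["Part 1", "Part 2", "Part 3", ""])
    return lambda prompt: next(canned, "")


draft = draw_out(fake_session(), "Write a comprehensive article on a topic.")
print(draft)
```

The collected draft still needs the same manual editing as any other output, since repeated "continue" prompts tend to repeat information.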

Improve the quality. After the AI generates an acceptable version, ask it to improve on it - with more detail, more examples, background, etc. Request additional versions and choose one or manually pull from them and assemble the best from each. Use the AI to improve its own output.

Example

Here's an example starting from a simple prompt that includes a detailed list of requirements that the AI was able to remember and successfully execute.

Example prompt:

"Write a comprehensive article on the films directed by Steven Soderbergh. Include detailed plot summaries, box office successes & failures, audience reactions, main actors, studio involved, production costs, etc. Include specific examples of critic reviews and any comments made by the director regarding each film."

I used 7 additional prompts of "continue" to draw out more info each time the generator paused. The writer generated decent content that definitely needs some manual editing. Note that starting with PROMPT 4 content starts repeating some information. Each section also has a concluding paragraph, so editing is needed before using this.

Note how much shorter the response would have been if left completely to the AI. Making multiple "continue" prompts got a much better though still flawed output. This is not really a "comprehensive article" as requested. I would suggest running more queries for additional background and commentary on Soderbergh's work & combine them with selected edits from this first draft.

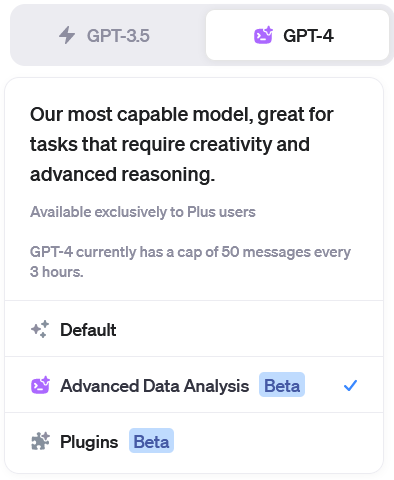

"Advanced Data Analysis" (formerly "Code Interpreter")

GPT4 comes with some very powerful utilities that improve productivity - by bringing data into the picture. You can upload a list of items and prompt the AI to use it.

In the above example (films by Steven Soderbergh), a better technique to avoid the duplication of information is to first make a list of all films as text or csv file (AI can do this for you). Then use the "Advanced Data Analysis" (formerly "Code Interpreter") feature. You can turn this feature on from the 3 dots, lower left -> settings & beta -> advanced data analysis. You can then drag the data file into the "send a message" field then write the first prompt:

"Write a comprehensive article using the uploaded films directed by Steven Soderbergh. Include detailed plot summaries, box office successes & failures, audience reactions, main actors, studio involved, production costs, etc. Include specific examples of critic reviews and any comments made by the director regarding each film."

Scaling Content

This last example might be simplified by using 2 data sets: "movies" & "information items".
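As a rough illustration of the two data sets idea, the sketch below assembles a prompt from a "movies" list and an "information items" list. The function name `build_prompt` and the sample lists are hypothetical, and the lists are written into the prompt text here rather than uploaded as files, purely to keep the example self-contained.

```python
def build_prompt(director, movies, info_items):
    """Combine a list of films and a list of information items into one prompt."""
    film_list = "; ".join(movies)
    item_list = ", ".join(info_items)
    return (
        f"Write a comprehensive article on the films directed by {director}: "
        f"{film_list}. For each film, include: {item_list}."
    )


# Illustrative data sets; swap in any other director, films, or items.
movies = ["Traffic", "Erin Brockovich"]
info_items = ["detailed plot summary", "box office results", "main actors"]

prompt = build_prompt("Steven Soderbergh", movies, info_items)
print(prompt)
```

Changing only the two lists and the director name produces a new prompt with the same structure.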

Using an optimized prompt along with two or more data sets, you can scale the content for any other set of films, directors, etc. This is actually a big deal. Content at scale.

Optimizing Existing Content

One of the superpowers of this technique for SEO is its ability to optimize content utilizing a list of the important keywords/entities. After uploading the entities, you can post the content and prompt together. I've been impressed at how well GPT4 can do this task. Still it pays to always improve your prompts - this one was developed to address simple problems the AI was exhibiting. It also provides a confirming list of the entities it successfully embedded, and a list not used. I always check this manually but so far GPT4 has provided accurate info when using this prompt:

[Original content] (post the text only version, above the main prompt)

"Create the appropriate contexts if needed and add as many of the uploaded entities as possible to the above text, preserving the original as much as possible so that important words are not lost. VERY IMPORTANT: OK to alter the text, add context or content provided you do not truncate or shorten the original. Make sure to preserve the full original text as much as possible INCLUDING all titles & subtitles. When finished, show a list of the entities that you added as well as those you were unable to add, one per line."

If you have a list of entities not used in the first pass, use it. It is possible to get a very large list of entities into content by repeating the entire process with updated lists. In most cases GPT4 does an excellent job at creating both the appropriate contexts and providing a comfortable read.
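The manual check of the used and unused entity lists can be partly automated. The following is a minimal sketch, assuming the revised text and the entity list are available as plain strings; `split_entities` is a hypothetical helper, and the whole-phrase, case-insensitive match is only one reasonable definition of "used".

```python
import re


def split_entities(text, entities):
    """Return (used, unused) entities by case-insensitive whole-phrase match."""
    used, unused = [], []
    for entity in entities:
        # Lookarounds keep "retail" from matching inside "retailer".
        pattern = r"(?<!\w)" + re.escape(entity) + r"(?!\w)"
        if re.search(pattern, text, re.IGNORECASE):
            used.append(entity)
        else:
            unused.append(entity)
    return used, unused


# Illustrative sample text and entities.
revised = "Our wholesale distributor ships to every retail market we serve."
entities = ["wholesale", "distributor", "retail", "manufacturer"]

used, unused = split_entities(revised, entities)
print("used:", used)
print("unused:", unused)
```

The `unused` list is the one to feed into the next pass.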

When writing content, there are times where you will likely need multiple "continue" prompts, especially if the last output is incomplete or too short. On some topics you'll be surprised at how many "continue" prompts it takes before there's any hint of a problem. Also try "more" - this prompt can get good results but it's prone to change the subject.

Always proofread generated content carefully. If you find any hallucinations, alter your prompt to require only provable facts. Confirm facts & claims before you publish.

Writing content is just one of the tasks GPT4 is capable of. Check this out for way more impressive examples of its capabilities (NB: Code Interpreter is now Advanced Data Analysis):

https://www.youtube.com/watch?v=O8GUH0_htRM

AI Detection

I noticed that the early AI writers created content that sometimes got sites penalized and wrote about it here. Google is capable of detecting overly generic content and harming the ranks of sites using it. Their "helpful content" algorithm update (August 2022) was specifically designed to address this. The fix was to humanize the content by adding more information directly tying the content to the specific site - something that is very likely warranted if the content generated is overly generalized or lacking supporting details.

There are now many AI detection tools to help you avoid using content that is clearly AI generated but GPT4 is much less likely to create this problem. I still run all content through the AI detection feature in on-page.ai but other tools are available for this test. OpenAI (creators of GPT4) shut down their detector due to ‘low rate of accuracy’ - not sure what to make of this. If you're creating content for an important site, I suggest you run the text through a couple of these detectors for peace of mind. That said I have seen signs of obviously AI written content on high ranking urls, sometimes with giveaway embedded semantics like "As an AI language model" or "knowledge cutoff" or "can't complete this prompt" or "As of my knowledge cutoff in September 2021", etc.
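Before publishing, a quick scan for the giveaway phrases mentioned above is easy to script. This is a minimal sketch, not a substitute for a detector: the phrase list is taken from the examples in this post, and `find_giveaways` is a hypothetical helper name.

```python
GIVEAWAYS = [
    "as an ai language model",
    "knowledge cutoff",
    "can't complete this prompt",
]


def find_giveaways(text):
    """Return the giveaway phrases found in text, ignoring case."""
    # Normalize curly apostrophes so "can’t" and "can't" both match.
    lowered = text.lower().replace("\u2019", "'")
    return [phrase for phrase in GIVEAWAYS if phrase in lowered]


sample = "As of my knowledge cutoff in September 2021, the law had not changed."
found = find_giveaways(sample)
print(found)
```

An empty result only means none of these literal phrases are present. It says nothing about whether the content reads as generic.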

AI Detection Tools

https://on-page.ai/ (subscription)

https://copyleaks.com/ai-content-detector

https://www.zerogpt.com/

https://sapling.ai/ai-content-detector

https://writer.com/ai-content-detector/

Paraphrasers

https://quillbot.com/

https://www.paraphraser.io/

https://www.editpad.org/tool/paraphra...

https://www.gptminus1.com/

This area is unfinished.

Longform & Code Generation

You can upload multiple files. Using data files: "topics" & "entities" & "layout" & "elements"

"topics" [costs, labor, benefits, source of funding, long range projections, website, marketing plan...]

"entities" [manufacturer, costs, business, market, retail, wholesale, distributor...]

"layout" [conditions for float, size, position...]

"elements" [images, videos, pdfs, links, containers, colors...]

(Upload the lists "topics" & "entities" & "layout")

"Write and style a longform post that covers in detail each item in the topics data, including detailed background information specific to each 'topic'. Create an html document using the rules from 'layout' for all 'elements'. When finished, show a list of the 'entities' used as well as those not used, one per line, and provide downloadable alternative html."

Updated 26 April 2023

AI in Search Engine Optimization: Benefits, Drawbacks, and Cautions

As we have seen, AI has significantly impacted the SEO landscape, but striking a balance between AI-generated content and human expertise remains crucial for long-term success. Artificial Intelligence (AI) has revolutionized several industries, and search engine optimization (SEO) is no exception. This update will examine the effective use of AI in the world of SEO, exploring the benefits and drawbacks associated with its application. We will also discuss the importance of AI detection and how it works to ensure ethical and fair use of AI-generated content in the digital landscape.

Benefits of AI in SEO

Keyword research and analysis: AI-powered tools can analyze vast amounts of data and provide valuable insights into keyword trends, search intent, and user behavior. This allows SEO professionals to fine-tune their keyword strategies and optimize their content for better visibility and search rankings.

Content optimization: AI can help in improving content by suggesting optimal content structures, headers, and meta tags. It can also analyze user-generated content to identify popular topics and trends, allowing content creators to better address their target audience's needs and interests.

Personalization: AI algorithms can track user behavior, preferences, and location data to deliver a personalized search experience. This helps businesses to target specific demographics and create more relevant, engaging content for their audience.

Voice search optimization: As voice search becomes increasingly popular, AI-powered systems can help optimize content for voice-based queries, improving the chances of being found by users who prefer speaking their queries instead of typing them.

Drawbacks of AI in SEO

Over-optimization: Relying solely on AI-generated content can lead to over-optimization, where content becomes too focused on specific keywords and phrases. This can reduce the natural flow of the text and potentially harm user experience and engagement.

Ethical concerns: The use of AI-generated content raises ethical concerns, especially when it comes to plagiarism or passing off computer-generated work as original human-authored content.

Lack of creativity and human touch: Although AI can generate content, it often lacks the creativity and emotional connection that human-authored content possesses. This may result in content that is technically well-optimized, but fails to resonate with the target audience.

Cautionary Aspects of Using Automated Content

Quality and relevance: AI-generated content may not always deliver the quality and relevance that human-authored content can provide. Ensuring content is engaging and valuable to users should always be a priority over purely optimizing for search engines.

Unintended consequences: Relying too heavily on AI-generated content can lead to the creation of low-quality or even misleading information, which can harm a brand's reputation and trustworthiness.

The Importance of AI Detection

As AI-generated content becomes more prevalent, it is crucial to develop AI detection methods to differentiate between human-authored content and machine-generated content. AI detection can help:

Maintain the integrity of search results: By identifying and potentially penalizing AI-generated content that does not adhere to ethical guidelines or provide genuine value to users, search engines can maintain a higher quality of search results.

Encourage ethical use of AI: AI detection can serve as a deterrent for those attempting to exploit AI-generated content for malicious purposes or to manipulate search rankings unethically.

AI has undoubtedly transformed the world of SEO, offering numerous benefits and opportunities for businesses to improve their online presence. However, it is essential to balance the use of AI with the human touch, ensuring that content remains engaging, relevant, and valuable to users. By developing and implementing AI detection methods, we can promote the ethical and responsible use of AI-generated content in the digital landscape, maintaining the integrity of search results and fostering a fair, competitive environment for all.

Updated 10 October 2022

AI Content Triggers Penalties

As predicted earlier this year, there are now a good number of SEO products using artificial intelligence to assist in ranking websites. These tools can reveal information and competently write English language content. The most popular is probably Jasper, the writing tool targeting SEOs but whose actual market is much larger because it's basically a content generator for any topic. The most sophisticated SEO tool is on-page.ai which uses Google's api to reveal detailed information regarding improving semantic optimization, along with generating readable text. These tools can very quickly create original content based on seeded topics and keywords that is good enough to cut and paste. The creators of these tools encouraged that because they knew that the AI content was actually original - created by the AI engine, not copied from another source, so it wouldn't be flagged as redundant. And it was fast. Writers could rapidly generate much more content and had a way to become super productive. It was too good to be true. The popularity of these tools and their widespread use as content generators eventually triggered blowback from Google.

The thing about AI generated content is that while factually true, even the most detailed passage is written generically. Google launched its "Helpful Content Update" to detect AI written content and harmed the ranks of sites relying on it. From the penalized sites we've seen, the issue can be remediated by avoiding generic stats and facts while tightly connecting the content to the purpose of the website. From Google's point of view, facts about certain legal problems and remedies do not help you understand how a specific law firm would handle your need. Really interesting to see Google's AI outing AI generated content.

Our note to SEOs: It's OK to use the facts generated by the AI writer in your content, but make those facts useful, helpful, personal, and specifically relevant to the motive behind the search query.

(March 2022)

Some Of Our Old Tools Just Got Deprecated

Google has been training their AI to improve the methods by which it determines semantic relevancy, and it's been impacting the search results for a while. Extremely valuable actionable knowledge can be derived from (1) the information revealed in their 2020 patent application combined with (2) access to their Natural Language Processor & Entities Data. Tools running their AI can now provide us with very specific, actionable information, in real time, concerning what directly influences their relevancy decisions. Their algorithm is constantly changing, and those changes are instantly reflected in the output of their AI processes which we can observe.

Optimizing The Semantic Signature of Content With Help From Google

You can now purchase api access to Google's Natural Language Processing (NLP) for AI, which analyzes unstructured text using Google machine learning. This, combined with their Entities data, is used to determine the relevancy of content for a given search term. It's been available for a while, and access has quickly become a marketable service - more SEO tools are on their way. The self improving nature of AI means that in principle, the ability to accurately understand content and assign relevance will be getting better with time. We can now be guided by Google's own standards toward the most effective optimization at the moment, creating the ideal semantic signature for a url.

The rapid adoption of artificial intelligence by Google along with recent revelations from their patent applications have created an opportunity to use this technology to improve search performance by addressing in detail a factor that is known to be important. Google's search engine patent applications demonstrate the organizational semantic concepts that provide the foundation of their logic. For example, all sites are assigned Categories or Topics and each may have multiple children categories - eg. /Shopping/Apparel/Casual Apparel. This and the thinking that lies behind the effort to understand semantics are available from their patents of March 2020, which go into much more detail.

SEOs read these patents and started applying the same organizational structures to their own content. A year later, the results of the first successful experiments using a strict semantic rule set following the principles set forth in Google's patent started coming in. In February 2021, Koray Tuğberk GÜBÜR posted the details of impressive results achieved by focusing only on the semantic organization of the content, and many others followed. This is the leading edge of a major shift that is underway - powered by AI and signaled by the recent focus on semantic SEO and by data terms of art, like "entity", entering the SEO vernacular.

There are new benchmarks being set for the requirements to fully optimize a site because we can now know much more about what Google is looking for. For example, the self improving semantic model Google uses to determine Categories relies on AI to analyze a site's content. It's still reading the words, but technology has enabled Google to move from matching words and text strings, to efforts to match intent and motive of a search query, greatly improving the quality of their results. So if you're trying to rank a website, the knowledge of how this evolving semantic process works, including what is valued, and how relevancy is determined, is super valuable.

The Importance of Entities

Google's NLP AI is focused on analyzing words to understand the meaning of content and search queries. The output of the tools can reveal what Google sees when it reads content in terms of "entities". These are keywords that have a specific meaning and convey an understanding as a result of usage. For example, the word "party" has a completely different meaning when used in content for an entertainment venue as opposed to content representing a law firm. The number and types of these entities determine the Categories assigned to a url. Just knowing what these entities are is huge. If you purchase the api, you can download all the current Google entities.

But the real power of AI is revealed in the comparisons of the semantic signatures of competing sites. Comparing the data from the top competitors for a given keyword exposes missing entities that can be easily remedied via simple content revision. The tools can reveal all of the most popular entities from the most successful sites. Many of these entities are keywords associated with valuable targets, but some are indications of non semantic relationships that expose intent or motive. These are the connections that permit a site to rank for a keyword not in its content because the AI understands the meaning of the entities from context.
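The competitor comparison comes down to a set difference. The sketch below assumes the entities for each page have already been extracted by one of these tools and are available as plain sets of strings. The sample data, the `missing_entities` helper, and the `min_sites` threshold are all hypothetical.

```python
from collections import Counter


def missing_entities(own, competitors, min_sites=2):
    """Entities used by at least min_sites competitors but absent from our page."""
    counts = Counter()
    for entity_set in competitors:
        counts.update(set(entity_set))  # count each entity once per competitor
    own_set = set(own)
    return sorted(e for e, n in counts.items() if n >= min_sites and e not in own_set)


# Illustrative entity sets for one keyword (hand-written, not real tool output).
own_page = {"attorney", "party", "settlement"}
competitor_pages = [
    {"attorney", "party", "settlement", "litigation"},
    {"attorney", "litigation", "damages"},
    {"party", "settlement", "damages", "appeal"},
]

gaps = missing_entities(own_page, competitor_pages)
print(gaps)
```

Requiring an entity to appear on more than one competing page is a design choice that filters out one-off terms. Lowering `min_sites` to 1 surfaces everything the competitors use.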

The Value of Situational Awareness

While this new approach is hugely valuable, it is just one element of many that influence search results. The reality is you can handily beat the competition semantically but underperform in the rankings. What we notice when we run existing client sites is that because most of the entities make common sense, and many are revealed with existing tools, we were already using most of them. And it is common to see excellent semantic optimization being overwhelmed by content from a more powerful brand or one receiving large numbers of authoritative links.

But in the super competitive atmosphere that most businesses have to contend with, these tools provide a welcome edge. They are very likely to expose weaknesses and present opportunities that previously went unseen. And it definitely makes sense to run them on an existing url that has not been semantically optimized. This would automatically include any important target url that has fewer than 1,000 words. It might also help with diagnostics on an underperforming keyword or url.
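The 1,000-word rule of thumb is simple to apply across a list of target urls. This is a minimal sketch, assuming the visible text of each page has already been extracted into a dictionary. The urls and the `thin_pages` helper are hypothetical, and words are counted by splitting on whitespace.

```python
def thin_pages(pages, min_words=1000):
    """Return urls whose text has fewer than min_words whitespace-separated words."""
    return [url for url, text in pages.items() if len(text.split()) < min_words]


# Placeholder page text: 1,200 words and 150 words.
pages = {
    "/services": "word " * 1200,
    "/contact": "word " * 150,
}

candidates = thin_pages(pages)
print(candidates)
```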

The obvious reason to run the AI is to see how Google views your content in comparison to your top competitors while it exposes targeting flaws in a way that is immediately addressable. Knowing what Google considers the most important words is obviously hugely valuable, and Google's AI tells us exactly this.

Putting It To Work

I expect to see a growing number of subscription tools that enable running Google's AI engine on your own content. They're pretty hard to find right now, but they're coming. Because they're paying Google for the apis, these tools can be expensive, but the revelations are impressive. At the simplest level, they can confirm that your site has been assigned the proper category and is recognized for its most important keywords, and if not, point to the fix. In a more sophisticated scaled environment where relevancy is created across large numbers of pages, knowledge of the entities permits you to create intelligent internal link strategies to effectively optimize very competitive terms.

We brought this technology into our practice and have been running it on selected sites. We can see Google's algorithm recognizing the improvement in our content immediately. I don't have the expectation that these improvements will always result in rapid rank advances. Instead, the goal for the enterprise is to always be as optimized as possible - the payoffs come with time as these efforts are recognized.

Some of the best opportunities to apply these new tools lie with legacy pages that already convert but have never really been semantically optimized. Or any highly focused page with comparatively little content. Or addressing a new target in a competitive field. With these new tools, we can quickly act on information directly from Google's algorithm and implement content that perfectly aligns with their relevancy models. This has become the new standard for semantic optimization.

(more coming)